Some 3000 indigenous Sámi people have dibs on around 40% of non-industrialised Norway to practice reindeer husbandry, sometimes to the annoyance of those among the 5.5 million Norwegians who would prefer to use the land for other purposes.

Norway’s energy directorate kicked off a bitter dispute by allowing 151 wind turbines to shoot up in a winter grazing area of two Sámi siidas, with the Supreme Court ruling it a human rights violation in 2021. The state dragged its feet, but after intense Sámi and activist protests outside Parliament in 2023, the state and wind energy companies agreed to pay compensation, reserve new grazing areas and grant the Sámis veto rights for extending the approved 25-year operation period of the wind farms.

What I found interesting was how most climate organisations and activists, like Greenpeace and Greta Thunberg, fell automatically in line with the Sámis. While there is no contradiction in holding both views, something is ideologically fishy about a climate change organisation weighing in firmly on the anti-renewable energy side over indigenous rights concerns. I’d expect effective and credible climate organisations to be focused on climate change, not be one arm of a political movement with a range of non-climate positions.

Then I looked around. Effective altruists do the same, not by accident, but by design. It’s usually a sign of political unhealth when you can reliably tell a person’s views on AI extinction risk from their position on malaria bednets. It’s weird to criticise the woke for turning pandemic response into an anti-racism exercise without seeing any problem with turning pro-social career guidance into a get-everyone-to-fight-AI-x-risk jig.

Before we get into the weeds, I want to make something very clear: I’m not criticising the EA and rationalist projects, but the way they’ve been operationalised. Scott has an excellent post named Criticism of Criticism of Criticism in which he slams EA fetishism of big paradigmatic criticisms that have few practical consequences. I hope to avoid this by focusing on what EAs and rationalists actually do, and make practical suggestions. I’ll describe them interchangeably, sometimes jointly as EARs, as they face similar risks and are socially intertwined. These risks aren’t new to EAR thinkers, but the social movement is not organised to deal with them.

Theory meets politics

I used to see effective altruism as a question, not an ideology. Criticism of EA misses the point, I would say, because any honest criticism of EA is in fact part of the EA project to critically explore how to do most good.

But that itself is the ideology, not the movement. There’s now a global and connected EA ecosystem of researchers, lobbyists and funders, with its own culture, career paths, public squares and influencers. The EA question has a list of answers attached, and conference admissions filter for knowledge of these.

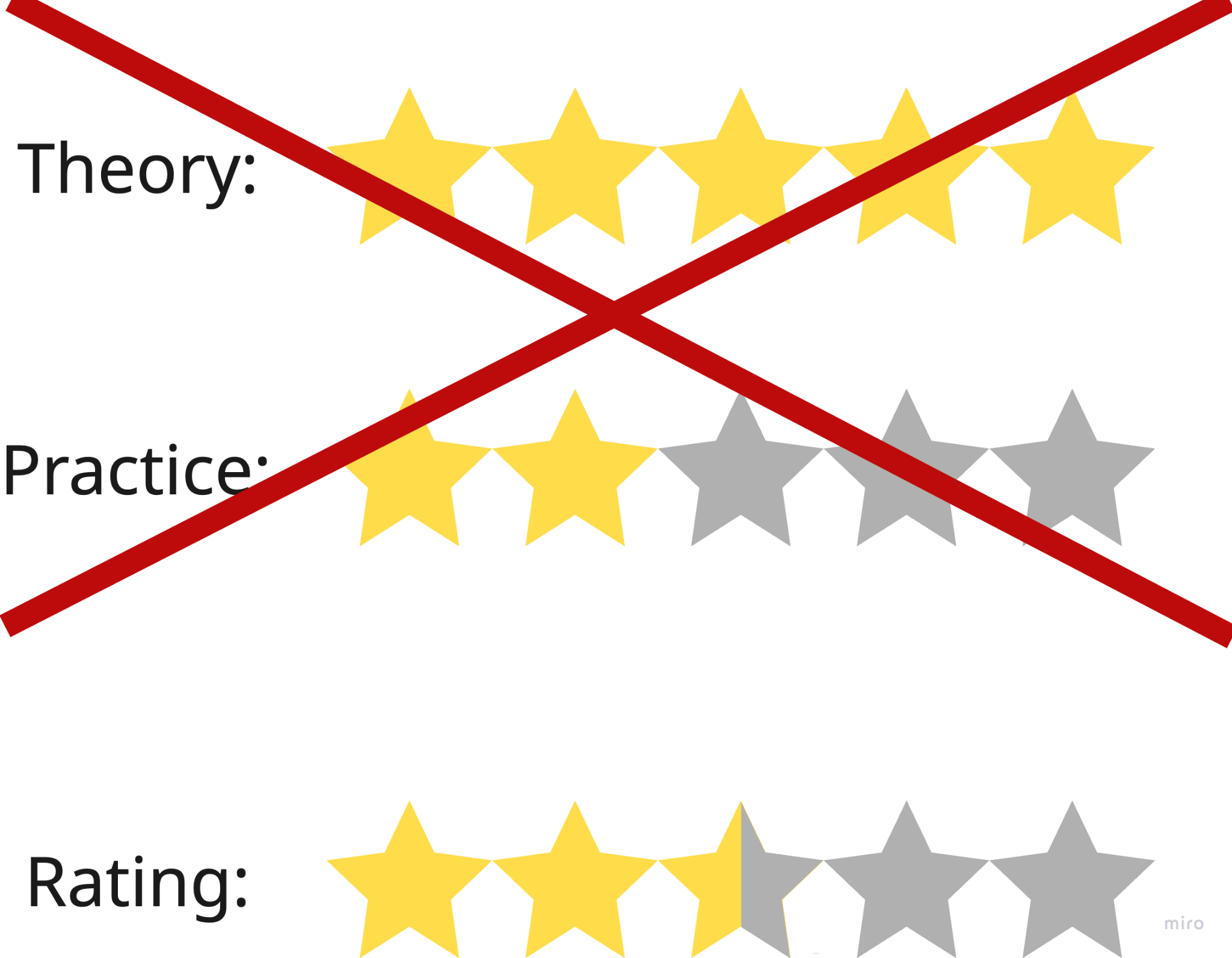

It’s a motte and bailey: EAs optimise for specific and sometimes highly speculative answers, but when pushed they frame these answers as completely tentative based on currently available evidence.

Here are four observations of EARs in action, mostly in the policy space:

- They are often more interested in and knowledgeable about EAR-specific ideas and research than the actual policy/research domains they work in and/or the institutions they want to influence.

- If they have a job in a non-EA organisation, they care more about leveraging the job to further agreed-upon EA policy goals than to do the job itself well.

- They target influential positions, and coordinate globally to bend society’s resources and institutions towards EA policy goals.

- They rarely read news, and can feel indignant over imprecise media coverage, even if the coverage is favourable and meets journalistic standards.

I don’t want to overstate these, but I’d be surprised if they don’t ring true to insiders. And they’re exactly the kind of behaviour that people hate woke/maga/etc for: ignoring shared liberal norms and institutions, and making everything about winning in politics.

The allure of objectivity

Grappling with contemporary WWII politics, Karl Popper singled out historicism (especially of Plato, Hegel and Marx) as the root of totalitarian ideologies:

the doctrine that history is controlled by specific historical or evolutionary laws whose discovery would enable us to prophesy the destiny of man.

A historicist’s big answers defeat all other values. Hard historicism sees current or new power structures as inevitable, which makes it futile to fight or change them. Soft historicism knows the ultimate aim of society, and so will look to realise it:

The Utopian approach may be described as follows. Any rational action must have a certain aim. It is rational in the same degree as it pursues its aim consciously and consistently, and as it determines its means according to this end. To choose the end is therefore the first thing we have to do if we wish to act rationally; and we must be careful to determine our real or ultimate ends, from which we must distinguish clearly those intermediate or partial ends which actually are only means, or steps on the way, to the ultimate end. If we neglect this distinction, then we must also neglect to ask whether these partial ends are likely to promote the ultimate end, and accordingly, we must fail to act rationally.

These principles, if applied to the realm of political activity, demand that we must determine our ultimate political aim, or the Ideal State, before taking any practical action. Only when this ultimate aim is determined, in rough outline at least, only when we are in possession of something like a blueprint of the society at which we aim, only then can we begin to consider the best ways and means for its realization, and to draw up a plan for practical action. These are the necessary preliminaries of any practical political move that can be called rational, and especially of social engineering.

He contrasts the Utopian approach with “piecemeal” social engineering,

the method of searching for, and fighting against, the greatest and most urgent evils of society, rather than searching for, and fighting for, its greatest ultimate good.

…

In favour of his method, the piecemeal engineer can claim that a systematic fight against suffering and injustice and war is more likely to be supported by the approval and agreement of a great number of people than the fight for the establishment of some ideal. The existence of social evils, that is to say, of social conditions under which many men are suffering, can be comparatively well established. Those who suffer can judge for themselves, and the others can hardly deny that they would not like to change places.

It is infinitely more difficult to reason about an ideal society. Social life is so complicated that few men, or none at all, could judge a blueprint for social engineering on the grand scale; whether it be practicable; whether it would result in a real improvement; what kind of suffering it may involve; and what may be the means for its realization.

As opposed to this, blueprints for piecemeal engineering are comparatively simple. They are blueprints for single institutions, for health and unemployed insurance, for instance, or arbitration courts, or anti-depression budgeting, or educational reform. If they go wrong, the damage is not very great, and a re-adjustment not very difficult. They are less risky, and for this very reason less controversial. But if it is easier to reach a reasonable agreement about existing evils and the means of combating them than it is about an ideal good and the means of its realization, then there is also more hope that by using the piecemeal method we may get over the very greatest practical difficulty of all reasonable political reform, namely, the use of reason, instead of passion and violence, in executing the programme. There will be a possibility of reaching a reasonable compromise and therefore of achieving the improvement by democratic methods.

EA is open to both Utopian and piecemeal social engineers. I’ll argue that global health-type interventions fall squarely in the piecemeal approach, while x-risk is dangerously historicist-Utopian:

We live during the hinge of history. Given the scientific and technological discoveries of the last two centuries, the world has never changed as fast. We shall soon have even greater powers to transform, not only our surroundings, but ourselves and our successors.

This is Derek Parfit looking at the next centuries. Scott and others believe decisions even in the next two (!) years could doom or bless humanity forever. Popper again:

It is always flattering to belong to the inner circle of the initiated, and to possess the unusual power of predicting the course of history.

Critics have pointed out the totalitarianism implicit or explicit in the transhumanist/longtermist project. Naive utilitarianism is authoritarian, dangerously so with plus or minus infinity on one side of the ethics equation. But those critics mix descriptive and normative claims. Dangerous ideas can be true, and blocking our ears is no way to tackle important issues.

Crucially, Popper is making a pragmatic political point:

It is important to understand this criticism properly; I do not criticize the ideal by claiming that an ideal can never be realized, that it must always remain a Utopia. This would not be a valid criticism, for many things have been realized which have once been dogmatically declared to be unrealizable, for instance…

What I criticize under the name Utopian engineering recommends the reconstruction of society as a whole, i.e. very sweeping changes whose practical consequences are hard to calculate, owing to our limited experiences. It claims to plan rationally for the whole of society, although we do not possess anything like the factual knowledge which would be necessary to make good such an ambitious claim.

For Popper, the first priority must always be to protect the liberal institutions that uphold democracy. Kill them to implement a Big Idea, and you take away society’s ability to improve (possibly forever). Fight big ideas and play by the rules, and you help society incrementally advance towards a better world.

EA’s first-principles result-oriented thinking leaves no automatic respect for democratic processes or institutions. It goes back to the motte and bailey: The only-a-question ideology places your own political ambitions with the good and the true, exactly the historicist allure that fosters disregard for democratic norms.

For global health (and other hands-on areas) it’s ok because it’s kind of true: Do the RCTs, then do what works best. For more speculative interventions, you have to build a case for higher expected QALYs than cash transfers and malaria nets. You measure the results, staying grounded in a reality feedback loop.

For politics, it’s cheating. If you have the good and true answers, you should optimise to implement them, and following the rules may hold you back. With the fate of the world at play, you should really stop at nothing to implement them, even if hard evidence is difficult to come by. Final warnings from Popper:

The Utopian engineer will have to be deaf to many complaints; in fact, it will be part of his business to suppress unreasonable objections. (He will say, like Lenin, ‘You can’t make an omelette without breaking eggs.’)

…

Those who prefer one step towards a distant ideal to the realization of a piecemeal compromise should always remember that if the ideal is very distant, it may even become difficult to say whether the step taken was towards or away from it.

Scott has noticed these skulls, but he speaks for the EAR elites. It’s easy to nod along, and then build a global crypto scam to save the world from AI. EAR puts the onus on the individual, but it’s easier to be a midwit than a genius when building your personal world model.

Sophistication risks

The paradox of rationalism is that each new insight is a trap.

Politically knowledgeable subjects, because they possess greater ammunition with which to counter-argue incongruent facts and arguments, will be more prone to the above [(dis-) confirmation] biases,

reads a classic 2006 study on politically motivated reasoning.

Cognitive biases are universal ammunition in this sense. Widespread and unbounded by topic, knowledge of reasoning flaws is, ironically, especially vulnerable to weaponisation.

2007-Yudkowsky argued this point in signature guru style:

The last time I gave a talk on heuristics and biases, I started out by introducing the general concept by way of the [weaponisable] conjunction fallacy and [weaponisable] representativeness heuristic. And then I moved on to confirmation bias, disconfirmation bias, sophisticated argument, motivated skepticism, and other attitude effects. I spent the next thirty minutes hammering on that theme, reintroducing it from as many different perspectives as I could.

…

Whether I do it on paper, or in speech, I now try to never mention calibration and overconfidence unless I have first talked about disconfirmation bias, motivated skepticism, sophisticated arguers, and dysrationalia in the mentally agile. First, do no harm!

I still feel like this undersells the problem. It’s amazingly thorny: The better you are at spotting errors, the more you know about the topic in question, and the more carefully you reason, the harder it gets. The more progress you make, the more elaborately your brain can fool you. Sophistication bias threatens to turn the whole rationalist project against itself.

Confirmation bias isn’t even needed, information asymmetries do the trick. I found Scott’s story about his Atlantis days instructive:

When I was a teenager I believed in a conspiracy theory. It was the one Graham Hancock wrote about in Fingerprints Of The Gods, sort of a modern update on the Atlantis story. It went something like this:

- Did you know that dozens of civilizations around the world have oddly similar legends about a lost continent that sunk under the waves? The Greeks called it Atlantis; the Aztecs, Atzlan; the Indonesians, Atala.

- Various ancient structures and artifacts appear to be older than generally believed. Geologists say that the erosion patterns on the Sphinx prove it must be at least 10,000 years old; some well-known ruins in South America have depictions of animals that have been extinct for at least 10,000 years.

- There are vast underwater ruins, pyramids and stuff. We know where they are! You can just learn to scuba dive and go see them! Historians just ignore them, or say they’re probably natural, but if you look at them, they’re obviously not natural.

Teenage me was impressed by these arguments. But he also had some good instincts and wanted to check to see what skeptics had to say in response. Here are what the skeptics had to say:

- “Haha, can you believe some people still think there was an Atlantis! Imagine how stupid you would have to be to fall for that scam!”

- “There is literally ZERO evidence for Atlantis. The ONLY reason you could ever believe it is because you’re a racist who thinks brown people couldn’t have built civilizations on their own.”

- “No mainstream historians believe in any of that. Do you think you’re smarter than all the world’s historians?”

Teenage Scott was wrong because he really tried to figure out the truth instead of trusting vibes in an information-asymmetric landscape. Even while desperately seeking out good counterarguments, he found only vibe-based condescension on the anti-Atlantis side, with no plausible counter-explanations. He knew much more than anyone he could find who disagreed with him, yet he was wrong and went on a five-year wild-goose chase before recovering.

Grown-up Scott told the story to defend why he spent 25,000 words to fight a similar ivermectin information asymmetry. I endorse that project. But notice the mixed moral of this story.

Rationalism is about winning, and the vibers won the Atlantis question. Not by argument, but they got it right. They will never be right when everyone is wrong, but isn’t it worse to be catastrophically wrong when everyone is right? Teenage Scott is right in theory, but in practice there’s a million more ways he can be wrong than the mainstream information market.

Error-correcting mechanisms can be invisible from the outside. Scientists have access to general knowledge and internal error-correcting mechanisms that filter what is worth investigating. If it’s not really worth looking into, then no one, or, in the worst case, only those lacking that knowledge, will write about the topic. Media relies on established scientists. When the system works, we’re left with an Atlantis-style asymmetry.

We shouldn’t blindly trust the mainstream, but it is an epistemic lifeline: Lose your grip, and pilot unanchored through an unbounded idea space, with no limit to how wrong you can be. Keep hold, and you can tentatively, carefully and selectively make targeted improvements.

Odd, then, that the rationalists should have such disdain for it.

Contrarianism

Grown-up Scott wrestles with when you can or can’t rely on experts in his Bounded Distrust-post.

There are times when experts and the establishment lie, but it’s not all the time. FOX will sometimes present news in a biased or misleading way, but they won’t make up news events that never happen. Experts will sometimes prevent studies they don’t like from happening, but they’re much less likely to flatly assert a clear, specific fact which isn’t true.

I think some people are able to figure out these rules and feel comfortable with them, and other people can’t and end up as conspiracy theorists.

I’m not blaming the second type of person. Figuring-out-the-rules-of-the-game is a hard skill, not everybody has it.

Ok, so if you don’t have it you should trust the mainstream, right?

If you don’t have it, then universal distrust might be a safer strategy than universal credulity.

What? This strikes me as a paranoid flattening of the landscape. Like putting cash under your bed instead of in the stock market for fear of speculating. The market may be skewed, but index funds always win in the end. Scott continues:

Obviously the good solution is that people stop lying and presenting misleading information.

But I think it’s important for these two types of people to understand each other.

The people who lack this skill entirely think it’s crazy to listen to experts about anything at all. They correctly point out time after time that they’ve lied or screwed up, then ask “so why do you believe them on ivermectin?” or “so why do you believe them on global warming?” My answer - which I don’t think is an obvious or easy answer, it’s a bold claim that could be wrong, is “I think I have a good sense of the dynamics here, how far people will bend the truth, and what it looks like when they do”. I realize this is playing with fire. But listening to experts is a powerful enough hack for finding the truth that it’s worth going pretty far to try to rescue it.

He reversed my logic. Instead of seeing digression from the mainstream as risky, he sees trusting the mainstream as playing with fire: By default, you should distrust everything. But tentatively, carefully, and selectively you can trust the experts sometimes.

Scott’s media complaint is similarly flattening: He shows how mainstream and alternative media misdirection is similar, with no mention of the mainstream’s overwhelming incentive advantage of a diverse audience and lots of (correcting) competitors. Yet, he is the positive one. The comment sections fill up with intellectuals complaining about the media and scientific consensus, even criticising Scott for having too much faith in the establishment.

The percentage [of journalists] who are engaged in a disinterested search for the truth is close to zero.

Zvi gets the prize for most paranoid/arrogant. While headlines, according to Zvi, are allowed to lie, blatantly contradict the article’s content and generally lay out a Narrative the source wants you to believe, the news body:

Is often part of an implicit conspiracy to suppress true information or spread false information, without explicit denial of the true info or explicit claims of the false info.

His guide reeks of machismo intellectualism. Figure out everything yourself from original sources, don’t trust anyone. Or trust folks like Zvi to not trust anyone for you. As a journalist, I find his ramblings embarrassingly out of touch with how media and the information market work. The main driver of technical imprecision isn’t malice, it’s compressing the facts into something readers understand. Journalists have some great and some less great epistemic incentives, and compete with each other to square them best. Zvi is incentivised to give fancy and convincing-sounding hot takes, but receives limited real-world feedback to ensure he isn’t living in the clouds.

The rationalist criticism is ironic. The sophisticates who could have improved mainstream debate complain about the quality of public discourse in their own private forum. Instead of improving shared institutions, the rationalist response is to retreat cowardly into clubs of epistemic snobs that self-categorise as Scott’s figured-out-the-rules-of-the-game savvys. They stand on the sidelines of the court bragging about how well they can strike the ball.

The crux

Observations 1-4 describe a movement that mostly does its own research, then looks to bend society to its wishes. Nobody designed it this way, but unless I’ve had a very unrepresentative experience, it is the way it played out.

When I tried my own hand at politics, we quickly realised that not all EA slam dunks hold up in the real world. To demonstrate x-risk neglectedness, Toby Ord wrote in The Precipice that

The international body responsible for the continued prohibition of bioweapons (the Biological Weapons Convention [BWC]) has an annual budget of just $1.4 million—less than the average McDonald’s restaurant

But, as any domain expert will tell you, the real problem is geopolitical tension. There are obvious ways to improve the convention, like adding a verification protocol, but they require trust that currently doesn’t exist among the great powers. One can work to fix this, but it is hardly the hyper-neglected EA cause area Ord frames it as. “Strengthen the BWC” is the top 80k biorisk recommendation.

We learned the same lesson across the board. Workable policy advice depended most on understanding the existing institutions and domains, the opposite of what observation 1 describes.

Moving aspiring intellectual do-gooders to isolated forums risks turning a positive force into a destructive one:

- The mainstream loses free-thinker insights and criticism. Discourse deteriorates and naive EAs who optimise their personal impact end up undermining democratic institutions rather than helping them.

- The free thinkers with good intentions risk losing touch with reality and being net negative for society. Their ideas and inventions don’t spread from the niche subculture. Rutger Bregman’s 2025 relaunch of the exact EA project as a revolutionary idea for the mainstream is testament to EA’s isolation.

Of course, there are still plenty of contact points, and the focus on rationality, uncertainty and criticism counteracts many risks. But the movements are too individualistic. It’s about how you personally can have accurate beliefs and influence the world. Scott wrote that you are playing with epistemological fire when you personally try to figure out who to trust, yet that is the whole rationalist moral.

The real solution is apolitical, society-scaled, self-correcting institutions.

Suggestions and questions

Here are seven ideas to rethink the EAR movements:

- Add “do no political harm” and classical liberalism to the EA curriculum. Popper is good for the dangers, but Wait but Why-author Tim Urban’s What’s Our Problem is an excellent explanation of the principles. Yudkowsky has some decent warnings of why the ends do not justify the means in practice. The system must ensure that individuals don’t end up with rich knowledge of dangerous ideas and poor awareness of the dangers.

- Read more news. Calibrate viewpoints as relative to mainstream science and discourse. I can’t believe this has to be argued. Be less online.

- Radical transparency of funding, activity, achievements etc to spread a healthy institutional norm, keep you in check and make you trustworthy for others. It also visualises what the movement does to facilitate spreading the ideas.

- Establish EAR auditing that keeps EAR institutions from being shady and doing harm.

- Nurture consensus-building institutions like prediction markets that derive or formalise insights, rather than leaving each individual to find their own way.

- Merge with mainstream institutions. Do joint research/projects, and redesign EAR institutions around the goal of strengthening the shared institutions of society.

- Debate the mainstream. Not as in make a propaganda video. As in, understand the actual debate to win the public narrative fairly. If there are systematic problems, then help fix them, don’t retreat to ivory towers.

Should EAG (and EAGx) exist? EA Global strengthens cross-cause-area, within-EA connections, nurturing a network based on EA affiliation rather than on domain-based ties with the rest of society. The same applies to Less Wrong, 80,000 Hours and the Effective Altruism Forum.

There’s a positive role to play for sophisticated forums. But if these split along political lines, I worry they undermine democracy at a time of rising totalitarianism. I hope EAR can continue to save lives, but transform its elitist epistemic and political pursuits into a democratic project that lifts all boats.